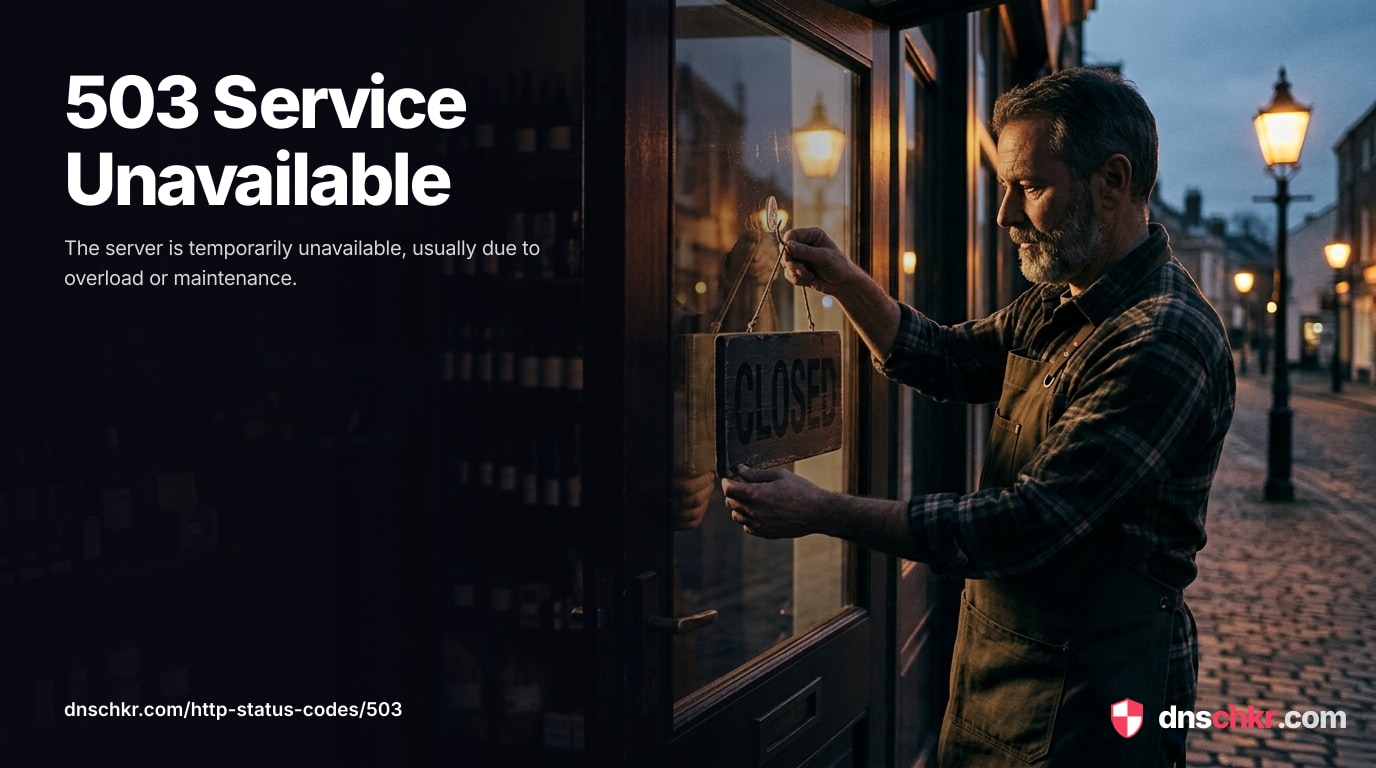

503 Service Unavailable

HTTP 503 Service Unavailable means the server is reachable but temporarily cannot handle the request. Unlike 500 (the server hit an unexpected error) or 502 (a gateway received an invalid response from upstream), 503 signals that the server knows it cannot serve you right now, usually because it is overloaded, in maintenance mode, or has exhausted a critical resource. Servers are encouraged, though not required, to include a Retry-After response header indicating how long the client should wait before retrying. 503 is the appropriate status code for planned downtime during deployments, database migrations, or infrastructure maintenance. It is also what load balancers return when all backend instances are unhealthy or when circuit breakers trip.

What HTTP 503 Looks Like in a Browser

Status

503 Service Unavailable

Example

Request

GET /shop HTTP/1.1

Host: www.example.com

User-Agent: Mozilla/5.0

Accept: text/html

Response

HTTP/1.1 503 Service Unavailable

Content-Type: text/html; charset=utf-8

Retry-After: 300

Content-Length: 230

<!doctype html>

<html lang="en">

<head>

<title>503 Service Unavailable</title>

</head>

<body>

<h1>Service Unavailable</h1>

<p>The site is temporarily down for maintenance. We will be back shortly. Please try again in a few minutes.</p>

</body>

</html>

How to Troubleshoot

1

Check the Retry-After header and status page

The 503 response may include a Retry-After header, given either as a number of seconds to wait or as an HTTP date. If the service has a status page (for example status.example.com), check it for incident details and estimated recovery time. If the outage is planned maintenance, the response body often contains a maintenance page with a scheduled end time.

curl -v https://example.com/ 2>&1 | grep -i 'retry-after\|maintenance\|503'
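Because Retry-After can arrive in either form, a script that acts on it has to normalize the value first. The following is a minimal sketch under stated assumptions: the function name `retry_after_seconds` is hypothetical, and the HTTP-date branch relies on GNU `date -d`, which behaves differently on BSD and macOS.

```shell
# Convert a Retry-After value into a number of seconds to wait.
# Hypothetical helper for illustration; the date branch assumes GNU date.
retry_after_seconds() {
  local value=$1 target now
  case $value in
    '' ) return 1 ;;                 # header missing or empty
    *[!0-9]* ) ;;                    # not purely digits: try the HTTP-date form below
    * ) echo "$value"; return 0 ;;   # delay-seconds form, e.g. "300"
  esac
  # HTTP-date form, e.g. "Wed, 21 Oct 2015 07:28:00 GMT"
  target=$(date -u -d "$value" +%s 2>/dev/null) || return 1
  now=$(date -u +%s)
  # A date in the past means the client may retry immediately
  if [ "$target" -gt "$now" ]; then
    echo $(( target - now ))
  else
    echo 0
  fi
}

# Example (assumes curl; example.com is a placeholder host):
#   value=$(curl -s -o /dev/null -D - https://example.com/ | tr -d '\r' \
#             | grep -i '^retry-after:' | cut -d' ' -f2-)
#   retry_after_seconds "$value"
```

The function returns a non-zero status when the header is missing or unparseable, so a caller can fall back to its own default delay.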

2

Check server resource usage (CPU, memory, disk, connections)

Monitor CPU, memory, disk space, and open file descriptors. High CPU means the server is compute-bound; high memory means it may be swapping or at risk of OOM kills; full disk means logs or temp files are consuming all space; too many open files means the file descriptor limit is hit. Any of these can cause 503.

# CPU and memory overview:

top -bn1 | head -20

# Disk space:

df -h

# Open connections and file descriptors:

ss -s

ls /proc/$(pgrep -f 'nginx|node|php-fpm|gunicorn' | head -1)/fd 2>/dev/null | wc -l

# System load average:

uptime
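For a recurring check, the disk-space step can be reduced to a filter that prints only the filesystems above a chosen limit. This is a sketch, not a monitoring tool: the function name `disks_over_threshold` and the 90 percent figure in the example are assumptions, and mount points containing spaces are not handled.

```shell
# Print mount points whose usage is at or above a threshold (percent).
# Reads `df -P` output on stdin so it can be exercised with canned data.
disks_over_threshold() {
  local threshold=$1
  awk -v limit="$threshold" '
    NR > 1 {                       # skip the header row
      use = $5                     # "Capacity" column, e.g. "93%"
      sub(/%/, "", use)
      if (use + 0 >= limit + 0) print $6, use "%"
    }'
}

# Example:
#   df -P | disks_over_threshold 90
```

Reading from stdin rather than calling `df` inside the function keeps the logic testable against saved output from another host.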

3

Check application worker pool status

Verify how many application workers are available versus busy. If all workers are busy, the application cannot accept new requests. For PHP-FPM, check the status page; for Gunicorn/Puma, check process counts; for Node.js, check event loop lag.

# PHP-FPM pool status:

curl -s http://127.0.0.1/fpm-status 2>/dev/null || echo 'Enable pm.status_path in php-fpm pool config'

# Count PHP-FPM worker processes:

ps aux | grep 'php-fpm.*pool' | grep -v grep | wc -l

# Count Gunicorn processes (includes the master process):

ps aux | grep gunicorn | grep -v grep | wc -l

# Nginx upstream configuration (shows configured backends, not live connection state):

nginx -T 2>/dev/null | grep -A5 'upstream'
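The PHP-FPM status page is easier to read at a glance when reduced to a single saturation line. The sketch below parses the plain-text status output; the function name `fpm_saturation` is hypothetical, and the `/fpm-status` path in the example is whatever `pm.status_path` is set to in your pool.

```shell
# Summarize PHP-FPM pool saturation from the plain-text status page on stdin.
fpm_saturation() {
  awk -F': *' '
    $1 == "active processes"     { active = $2 }
    $1 == "total processes"      { total = $2 }
    $1 == "listen queue"         { queue = $2 }
    $1 == "max children reached" { reached = $2 }
    END {
      if (total + 0 == 0) { print "no pool data"; exit 1 }
      printf "busy=%d/%d (%d%%) queue=%d max_children_reached=%d\n",
             active, total, active * 100 / total, queue, reached
    }'
}

# Example:
#   curl -s http://127.0.0.1/fpm-status | fpm_saturation
```

A non-zero listen queue or a growing "max children reached" counter suggests requests are waiting for a free worker, which is the condition this step is looking for.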

4

Check database connection pool

Verify how many database connections are in use versus available. An exhausted connection pool causes all database-dependent requests to fail with 503. Consider adding a connection pooler (PgBouncer for PostgreSQL, ProxySQL for MySQL) to manage connections more efficiently.

# PostgreSQL - check active connections vs limit:

psql -c "SELECT count(*) as active, (SELECT setting FROM pg_settings WHERE name='max_connections') as max FROM pg_stat_activity;"

# MySQL - check connections:

mysql -e "SHOW STATUS LIKE 'Threads_connected'; SHOW VARIABLES LIKE 'max_connections';"
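Once the two numbers are known, turning them into a percentage makes it obvious how close the database is to its limit. A small sketch follows; the name `conn_usage` and the default 80 percent warning level are assumptions chosen for illustration, not recommended values.

```shell
# Report connection usage as a percentage and flag it above a warning level.
# Arguments: connections in use, configured maximum, warning threshold in % (default 80).
conn_usage() {
  local used=$1 max=$2 warn=${3:-80}
  [ "$max" -gt 0 ] || return 1       # avoid dividing by zero
  local pct=$(( used * 100 / max ))  # integer percentage, rounded down
  if [ "$pct" -ge "$warn" ]; then
    echo "WARN ${pct}% (${used}/${max})"
  else
    echo "OK ${pct}% (${used}/${max})"
  fi
}

# Example with PostgreSQL (-A unaligned output, -t tuples only):
#   conn_usage "$(psql -At -c 'SELECT count(*) FROM pg_stat_activity')" \
#              "$(psql -At -c 'SHOW max_connections')"
```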

5

Check load balancer health checks

If using a load balancer, verify that backend instances are passing health checks. Check the load balancer's dashboard or logs to see which instances are healthy. If all instances are unhealthy, the shared dependency (database, external API) that the health check relies on may be down.

# Test the health check endpoint manually:

curl -s -o /dev/null -w '%{http_code}' http://127.0.0.1:3000/health

# AWS ALB — check target health:

aws elbv2 describe-target-health --target-group-arn TARGET_GROUP_ARN

6

Scale up or add capacity to handle the load

If the server is genuinely overwhelmed by legitimate traffic, you need more capacity. Increase the number of application workers, add more server instances behind the load balancer, enable auto-scaling, add caching (Redis, CDN) to reduce the load on the application, or optimize slow endpoints that consume too many workers. For PHP-FPM, increase pm.max_children; for Nginx, increase worker_connections.

# PHP-FPM - check and increase max children:

grep 'pm.max_children' /etc/php/*/fpm/pool.d/www.conf

# Nginx - check worker connections:

nginx -T 2>/dev/null | grep 'worker_connections'
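Before raising pm.max_children, it helps to check how many workers the machine's memory can hold. A common rule of thumb divides a memory budget by the average worker size. The sketch below applies it; the function name `estimate_max_children` and the 2048 MiB budget in the example are assumptions, and because RSS counts shared pages once per process, the result tends to be conservative.

```shell
# Estimate a ceiling for pm.max_children from a memory budget.
# stdin: one resident set size per line in KiB (as printed by `ps -o rss=`).
# $1:    memory in MiB the pool may use (a budget you choose, not a measured value).
estimate_max_children() {
  awk -v budget_mib="$1" '
    $1 > 0 { sum += $1; n++ }
    END {
      if (n == 0) { print "no worker processes found"; exit 1 }
      avg_mib = sum / n / 1024
      printf "avg_worker_mib=%.1f max_children=%d\n", avg_mib, int(budget_mib / avg_mib)
    }'
}

# Example (Linux procps; the process name may be versioned, e.g. php-fpm8.2):
#   ps -C php-fpm -o rss= | estimate_max_children 2048
```

Treat the output as a starting point and measure under real load, since worker memory varies with the requests being served.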

Common Causes

Server overloaded with too many concurrent requests

high

The server is handling more requests than it can process and is rejecting new ones to protect itself. This happens during traffic spikes (product launch, viral post, marketing campaign), DDoS attacks, or when the application is too slow and requests pile up faster than they complete. Web servers have limits on concurrent connections: Nginx worker_connections, Apache MaxRequestWorkers, or application-level thread/worker limits. When all workers are busy, new requests get 503.

Application worker processes or threads exhausted

high

All available application workers (PHP-FPM children, Gunicorn workers, Puma threads, Node.js cluster workers) are busy processing requests and none are free to handle new ones. This happens when requests take too long due to slow database queries, external API calls that hang, or computationally expensive operations. The worker pool is finite, and when it is full, the application returns 503 for any additional requests. This is different from a total crash: the application is running but at capacity.

Planned maintenance, deployment, or migration in progress

medium

The application is intentionally taken offline for maintenance, such as a database migration, server updates, deployment of a new version, or infrastructure changes. Many deployment strategies involve a brief 503 window during the switchover. Applications often have a 'maintenance mode' that returns 503 with a friendly maintenance page. This is the correct HTTP status code for planned downtime because it tells search engines the downtime is temporary and not to de-index the pages.

Database connection pool exhausted

medium

The application has used all available database connections and cannot open new ones. Database connections are a limited resource: PostgreSQL defaults to 100 max connections, MySQL to 151. When all connections are in use and no connection pooler (PgBouncer, ProxySQL) is configured, new requests that need the database cannot be served. This often happens under load when slow queries hold connections open longer than expected.

Load balancer circuit breaker tripped or all backends unhealthy

medium

A load balancer (AWS ALB, HAProxy, Nginx upstream) has marked all backend instances as unhealthy after they failed health checks. This happens when all backend instances crash simultaneously, when a shared dependency (database, external API) fails and causes all health checks to fail, or when the health check endpoint itself has a bug. The load balancer returns 503 because it has no healthy backend to forward the request to.

References

| Specification | Section |
|---|---|
| HTTP Semantics | RFC 9110 §15.6.4 |

This reference was compiled from official RFCs, protocol specifications, and hands-on troubleshooting experience. AI tools were used primarily for formatting and organizing the content on the page.